In a competitive market, delivering flawless software is no longer a luxury; it's the baseline for survival. Poor testing practices lead directly to buggy releases, frustrated customers, and a damaged brand reputation. To stay ahead, development and QA teams must integrate proven software testing best practices that embed quality into every step of the development lifecycle, not just tack it on at the end.

This guide provides a definitive roundup of the nine most impactful strategies that will elevate your quality assurance process. We will move beyond surface-level tips to provide actionable insights you can implement immediately. Forget generic advice; we're focusing on specific methodologies designed to prevent defects, reduce costly rework, and accelerate your time to market.

You will learn how to:

- Integrate testing from the very beginning with Shift-Left Testing and Test-Driven Development (TDD).

- Automate smarter, not harder, by applying the Test Automation Pyramid.

- Align development with user needs through Behavior-Driven Development (BDD).

- Prioritize your efforts effectively using Risk-Based Testing.

By mastering these techniques, you can build a robust quality culture that catches issues early, streamlines collaboration between developers and testers, and ultimately helps you ship superior products with confidence. This isn't just about finding bugs; it's about preventing them from ever reaching your users. Let's dive into the practices that will transform your approach to software quality.

1. Master the Cycle with Test-Driven Development (TDD)

Test-Driven Development (TDD) flips the traditional development process on its head. Instead of writing code and then figuring out how to test it, TDD requires you to write a failing test before you write a single line of production code. This approach, popularized by software pioneers like Kent Beck, forces clarity and intentionality into the development process from the very start. It ensures every new feature or fix is directly tied to a specific, testable requirement.

The core of TDD is a simple, repeating cycle that drives both quality and design:

- Red: Write a small, automated test for a new piece of functionality. Since the code doesn't exist yet, this test will naturally fail.

- Green: Write the absolute minimum amount of code required to make the test pass. The goal here isn't elegance; it's simply to satisfy the test.

- Refactor: With a passing test as your safety net, you can now clean up, simplify, and improve the code you just wrote without worrying about breaking its functionality.

This cycle is repeated for every new feature, resulting in a comprehensive suite of tests and cleaner, more maintainable code. Companies like Spotify and Microsoft have successfully adopted TDD to improve the reliability of their backend services and core products.
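To make the cycle concrete, here is a minimal sketch. The `is_valid_password` function and its rules (at least eight characters, at least one digit) are hypothetical, chosen only for illustration. The tests are written first and fail until the function exists; the function body is the small, tidied implementation that makes them pass.

```python
import re

# Red: these tests are written first. Until is_valid_password exists,
# running them fails with a NameError.
def test_password_shorter_than_eight_characters_is_rejected():
    assert not is_valid_password("abc123")


def test_password_without_a_digit_is_rejected():
    assert not is_valid_password("abcdefgh")


def test_long_password_with_a_digit_is_accepted():
    assert is_valid_password("abcdefg1")


# Green, then Refactor: the simplest implementation that satisfies the
# tests, with the magic number pulled into a named constant afterwards.
MIN_LENGTH = 8  # hypothetical rule for this example


def is_valid_password(candidate: str) -> bool:
    """Return True if the candidate meets the example password rules."""
    has_digit = re.search(r"\d", candidate) is not None
    return len(candidate) >= MIN_LENGTH and has_digit
```

The test functions use plain `assert` statements, so a runner such as pytest can collect them by name, but they can also be called directly.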

How to Implement TDD Effectively

Adopting TDD requires discipline, but the long-term benefits are substantial. Start by applying it to complex business logic where the requirements are clear but the implementation could be tricky. Avoid using it for simple code like basic getters and setters where the risk is low.

To make TDD a cornerstone of your software testing best practices, focus on these tips:

- Start Small: Begin with simple, focused tests that check one specific behavior.

- Be Descriptive: Name your tests clearly to describe what they are testing (e.g., test_user_cannot_login_with_invalid_password).

- Keep Tests Independent: Each test should be able to run on its own without relying on others.

- Commit to the Cycle: The magic is in the discipline. Always write the test first.

2. Continuous Integration and Testing

Continuous Integration (CI) and Continuous Testing are foundational practices that automate the building and validation of code changes. Instead of developers working in isolated branches for weeks, CI encourages them to merge their changes into a central repository multiple times a day. Each merge, or "integration," automatically triggers a build and a suite of tests, providing immediate feedback on the health of the codebase. This approach, championed by pioneers like Martin Fowler and Grady Booch, catches integration issues and bugs early, making them faster and cheaper to fix.

The core principle of CI is to create a reliable, automated pipeline that keeps the main codebase in a constantly deployable state. When a developer commits code, the CI server automatically:

- Builds: Compiles the source code into an executable artifact.

- Tests: Runs automated unit, integration, and other tests against the build.

- Reports: Notifies the team immediately if the build or any tests fail.

This rapid feedback loop prevents the dreaded "integration hell," where merging large, conflicting changes becomes a major bottleneck. Tech giants like Google process millions of builds daily with their internal CI systems, while Netflix runs thousands of automated tests with every commit to maintain service stability. Atlassian even reduced their deployment times by 75% using a CI/CD pipeline, showcasing its power to accelerate delivery.
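The build, test, and report steps described above can be sketched in a few lines. This is a toy model, not how any particular CI server works: `run_pipeline`, `report`, and the stage names are assumptions made for illustration, and each stage is a plain function that returns True on success.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class StageResult:
    name: str
    passed: bool


def run_pipeline(stages: List[Tuple[str, Callable[[], bool]]]) -> List[StageResult]:
    """Run stages in order and stop at the first failure."""
    results = []
    for name, stage in stages:
        passed = stage()
        results.append(StageResult(name, passed))
        if not passed:
            break  # later stages are skipped once the build is red
    return results


def report(results: List[StageResult]) -> str:
    """Summarize the run in one line for the team notification."""
    failed = [r.name for r in results if not r.passed]
    if failed:
        return "BUILD FAILED at: " + failed[0]
    return "BUILD PASSED"
```

For example, `report(run_pipeline([("build", lambda: True), ("test", lambda: False)]))` returns `"BUILD FAILED at: test"`. Stopping at the first red stage is what keeps the feedback fast.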

How to Implement CI and Testing Effectively

Adopting CI is a cultural shift that requires team commitment to maintaining a green build. It's most effective in agile environments where rapid iteration is key. The goal is to make small, frequent changes to minimize risk and improve visibility across the team.

To make continuous integration a key part of your software testing best practices, focus on these tips:

- Commit Small and Often: Encourage developers to integrate their work daily in small, manageable increments.

- Keep Tests Fast: The entire test suite should run in minutes to ensure rapid feedback.

- Fix Broken Builds Immediately: A broken build should be the team's top priority to prevent blocking others.

- Use Feature Flags: Merge incomplete features behind feature flags to avoid disrupting the main branch.

- Monitor and Optimize: Keep an eye on build times and test failures to identify and resolve bottlenecks in your pipeline. For more insights on improving your workflows, explore our guide on service delivery optimization.
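As an illustration of the feature-flag tip, here is a minimal sketch that reads flags from environment variables. The `FEATURE_` prefix, the `is_enabled` helper, and the `checkout` function are assumptions for this example; real projects often use a dedicated flag service instead.

```python
import os


def is_enabled(flag_name: str, default: bool = False) -> bool:
    """Read a flag from an environment variable such as FEATURE_NEW_CHECKOUT."""
    raw = os.environ.get(f"FEATURE_{flag_name.upper()}")
    if raw is None:
        return default
    return raw.strip().lower() in {"1", "true", "on", "yes"}


def checkout(cart_total: float) -> str:
    """Route to the unfinished flow only when its flag is switched on."""
    if is_enabled("new_checkout"):
        return f"new checkout flow: {cart_total:.2f}"
    return f"legacy checkout flow: {cart_total:.2f}"
```

With the flag unset, the incomplete code path is merged but never executed, so the main branch stays releasable.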

3. Prioritize Efforts with Risk-Based Testing

Not all features carry the same weight. Risk-Based Testing (RBT) is a strategic approach that acknowledges this reality by prioritizing testing efforts based on the probability and impact of potential failures. Instead of trying to test everything equally, RBT focuses resources on high-risk areas of the application, ensuring that the most critical functionalities receive the most rigorous attention. This method, championed by industry leaders like James Bach and formalized in standards like ISO 29119, makes testing more efficient and effective.

The core idea is to identify which software components or features are most likely to fail or would cause the most severe damage if they did. For example, a banking application would prioritize testing payment processing and security features over updating a user's profile picture. Similarly, an e-commerce platform dedicates more resources to validating the checkout and payment workflows than to testing the "About Us" page. This practical focus is a key component of modern software testing best practices.

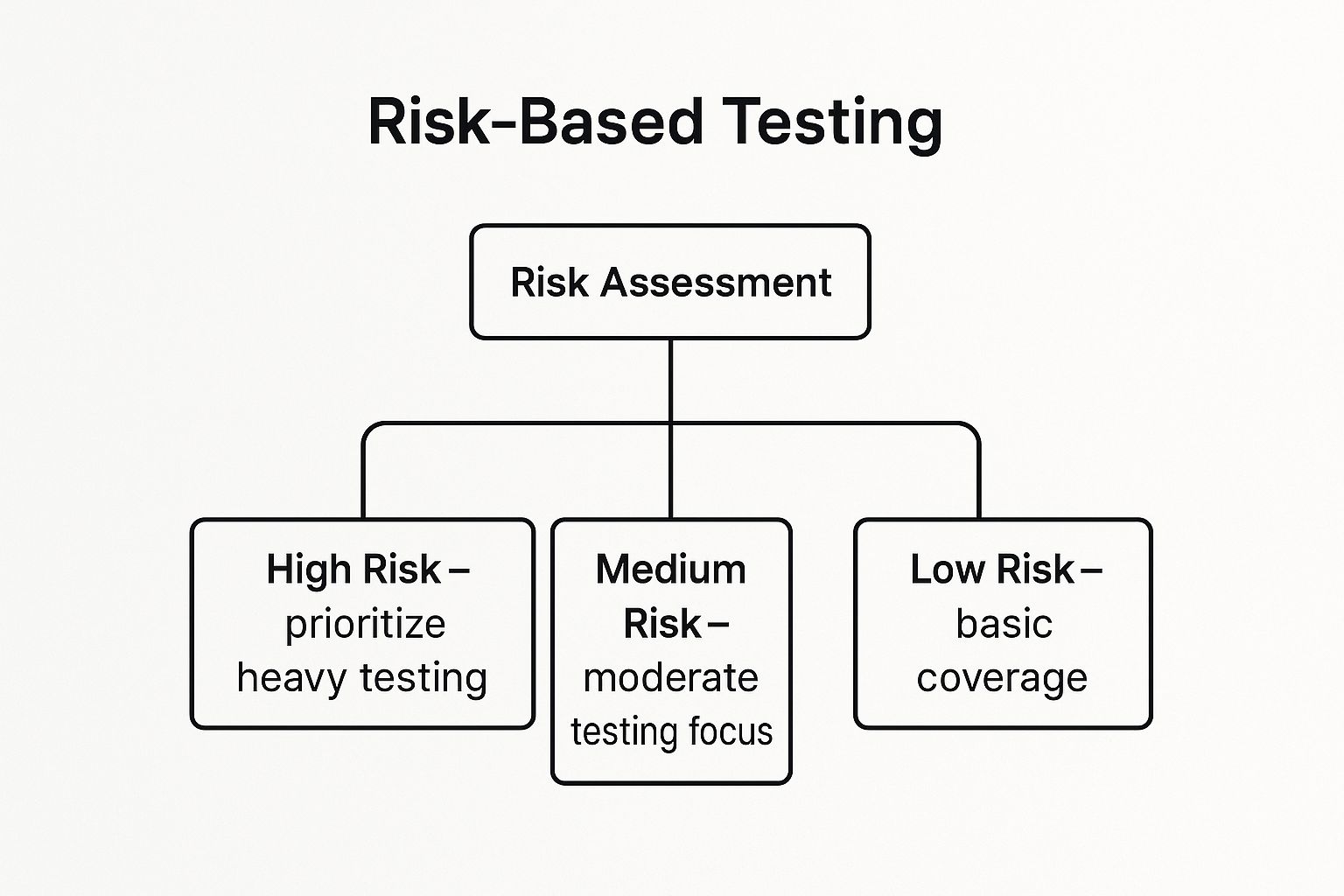

The following diagram illustrates how a risk assessment breaks down testing priorities into a simple hierarchy.

This hierarchy guides the allocation of testing resources, ensuring that high-impact areas receive exhaustive testing while low-risk features get basic coverage.

How to Implement Risk-Based Testing Effectively

To successfully implement RBT, you must align testing activities with business objectives. Start by identifying potential risks through brainstorming sessions, analyzing historical defect data, and consulting with both technical teams and business stakeholders. The goal is to create a shared understanding of what matters most.

To integrate this strategy into your quality assurance procedures, follow these tips:

- Involve Stakeholders: Collaborate with business analysts and product owners to identify business-critical functions.

- Use Historical Data: Analyze past bug reports and production incidents to pinpoint historically problematic areas.

- Update Regularly: Risk is not static. Re-evaluate your risk assessment as the product evolves and new features are added.

- Combine Perspectives: A comprehensive risk profile considers both technical complexity (e.g., integrations, complex algorithms) and business impact (e.g., revenue loss, reputational damage).
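One common way to turn probability and impact into a ranking is to multiply them into a single risk score. The sketch below assumes 1-to-5 scales and made-up thresholds for how much testing each score deserves; the feature names and numbers are illustrative, not measured data.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Feature:
    name: str
    probability: int  # likelihood of failure, 1 (rare) to 5 (frequent)
    impact: int       # business impact of a failure, 1 (minor) to 5 (severe)

    @property
    def risk_score(self) -> int:
        return self.probability * self.impact


def prioritize(features: List[Feature]) -> List[Feature]:
    """Return features ordered from highest to lowest risk score."""
    return sorted(features, key=lambda f: f.risk_score, reverse=True)


def coverage_level(score: int) -> str:
    """Map a risk score to a testing depth (thresholds are assumptions)."""
    if score >= 15:
        return "exhaustive"
    if score >= 6:
        return "standard"
    return "basic"


features = [
    Feature("profile picture upload", probability=2, impact=1),
    Feature("payment processing", probability=4, impact=5),
    Feature("checkout", probability=3, impact=5),
]

for feature in prioritize(features):
    print(feature.name, feature.risk_score, coverage_level(feature.risk_score))
```

Re-running the ranking whenever scores change is a cheap way to keep the risk assessment current as the product evolves.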

4. Build a Stable Foundation with the Test Automation Pyramid

The Test Automation Pyramid is a strategic framework that guides how to balance different types of automated tests for maximum efficiency and stability. Instead of relying heavily on slow and brittle user interface (UI) tests, this model advocates for a healthy distribution of tests across different layers of the application. Popularized by thought leaders like Mike Cohn, it prioritizes fast, reliable tests at the bottom and minimizes slow, expensive ones at the top, creating a more robust and maintainable test suite.

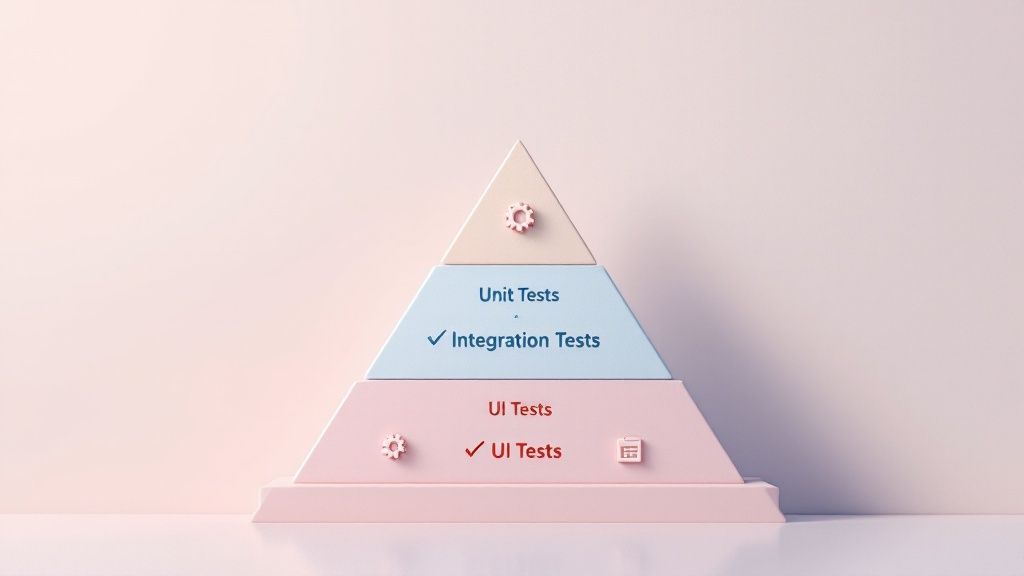

The pyramid is built on three distinct layers that represent the ideal test distribution:

- Unit Tests (Base): This is the largest and most important layer. These tests are fast, isolated, and check individual components or functions. They provide quick feedback to developers and form the stable foundation of your testing strategy.

- Integration Tests (Middle): This layer tests how different parts of the system work together, such as interactions between services or with a database. They are fewer in number than unit tests because they are slower and more complex to set up.

- UI/End-to-End Tests (Top): This is the smallest layer, reserved for testing critical user workflows from start to finish. These tests are the slowest, most expensive to maintain, and most prone to flakiness, so they should be used sparingly.

This approach ensures that most defects are caught early and cheaply at the unit level. Google's testing blog has suggested a 70/20/10 split (70% unit, 20% integration, 10% E2E) as a starting point, and the ratio is widely used as a rule of thumb.

How to Implement the Test Automation Pyramid

Adopting this model requires a conscious shift in testing priorities away from the UI. It encourages teams to test logic as close to the code as possible. Start by analyzing your current test suite and identifying opportunities to push tests down the pyramid.

To make the Test Automation Pyramid a key part of your software testing best practices, focus on these guidelines:

- Aim for the 70/20/10 Ratio: Use this as a general guideline to balance your test suite.

- Focus Logic at the Unit Level: The vast majority of your business logic should be verified with fast unit tests.

- Use Integration Tests for Contracts: Verify interactions between services, APIs, and data layers in the middle tier.

- Reserve UI Tests for Critical Journeys: Only automate end-to-end scenarios for essential user paths like login or checkout.
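To see how far an existing suite is from the guideline, you can compare test counts per layer against the target ratio. This is a rough sketch: the layer names, the `layers_off_target` helper, and the 10-point tolerance are assumptions, and you would supply the counts from your own test runner.

```python
from typing import Dict, List

# The 70/20/10 guideline expressed as fractions of the whole suite.
TARGET = {"unit": 0.70, "integration": 0.20, "e2e": 0.10}


def distribution(counts: Dict[str, int]) -> Dict[str, float]:
    """Return each layer's share of the total number of tests."""
    total = sum(counts.values())
    if total == 0:
        raise ValueError("the suite contains no tests")
    return {layer: counts.get(layer, 0) / total for layer in TARGET}


def layers_off_target(counts: Dict[str, int], tolerance: float = 0.10) -> List[str]:
    """List layers whose share differs from the target by more than the tolerance."""
    actual = distribution(counts)
    return [
        layer
        for layer in TARGET
        if abs(actual[layer] - TARGET[layer]) > tolerance
    ]
```

A top-heavy suite such as `{"unit": 100, "integration": 100, "e2e": 300}` is flagged for both the unit and e2e layers, which is a signal to push tests down the pyramid.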

5. Move Quality Upstream with Shift-Left Testing

Shift-left testing is an approach that moves testing activities earlier in the software development lifecycle. Instead of treating testing as a final gate before release, this practice integrates it into the requirements, design, and coding phases. This proactive strategy, popularized by figures like Larry Smith and championed by the Agile and DevOps communities, focuses on preventing defects rather than just finding them late in the game. It ensures quality is a shared responsibility from day one.

The core principle is simple: the earlier a defect is found, the cheaper and easier it is to fix. By "shifting left" on the project timeline, teams can identify issues in requirements or architectural designs before a single line of code is written. This dramatically reduces the cost and effort associated with late-stage bug fixes, which often require extensive rework.

This practice fundamentally changes the role of testers, transforming them from gatekeepers to collaborative quality advisors. Companies like IBM and Microsoft have embraced shift-left testing to accelerate their DevOps transformations, while Accenture has reported reducing defect costs by as much as 40% by implementing this approach.

How to Implement Shift-Left Testing Effectively

Adopting a shift-left mindset requires a cultural change where the entire team owns quality. Start by integrating testing activities into the earliest stages of your projects, focusing on collaboration between developers, testers, and product owners.

To make shift-left a core part of your software testing best practices, focus on these tips:

- Involve Testers Early: Include QA engineers in requirement and design review meetings to identify potential ambiguities and risks.

- Use Static Analysis: Implement static code analysis tools that automatically check code for potential bugs and vulnerabilities during development.

- Train Your Developers: Equip developers with basic testing techniques and tools so they can perform thorough unit and integration tests.

- Automate in the Pipeline: Integrate automated testing directly into your CI/CD pipeline to provide fast feedback on every code change.
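To give a feel for what a static analysis check does, here is a tiny sketch built on Python's standard `ast` module. It flags bare `except:` clauses, which can hide real errors. Production teams would normally rely on an established linter; the `find_bare_excepts` helper is a hypothetical example of the idea, not a replacement for one.

```python
import ast
from typing import List


def find_bare_excepts(source: str) -> List[int]:
    """Return the line numbers of bare `except:` clauses in the source."""
    tree = ast.parse(source)
    return sorted(
        node.lineno
        for node in ast.walk(tree)
        if isinstance(node, ast.ExceptHandler) and node.type is None
    )


SAMPLE = """\
try:
    risky()
except:
    pass
"""

print(find_bare_excepts(SAMPLE))
```

Because the check only parses the code and never runs it, it can execute on every commit, long before the change reaches a tester.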

6. Align Teams with Behavior-Driven Development (BDD)

Behavior-Driven Development (BDD) builds on the principles of TDD but shifts the focus from writing tests to defining behavior. This collaborative approach uses a shared, natural language format to describe how an application should behave from a user's perspective. BDD, pioneered by Dan North, is designed to bridge the communication gap between business stakeholders, developers, and QA teams, ensuring everyone has a unified understanding of the requirements before any code is written.

BDD scenarios are written in a structured, plain-language format called Gherkin, which uses Given-When-Then keywords to outline a specific interaction:

- Given: Describes the initial context or precondition.

- When: Specifies an action or event performed by the user.

- Then: Details the expected outcome or result of the action.

This "executable specification" acts as both documentation and an automated test. By writing these scenarios collaboratively, teams ensure that the software being built directly aligns with business needs. Major organizations like REI and the UK Government Digital Service use BDD to test their customer-facing applications, confirming that features meet user expectations from day one.
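The Given-When-Then structure can be sketched in plain Python. A framework such as Cucumber or SpecFlow would bind the scenario text to step definitions for you; the version below only mirrors that structure by hand, and the `Cart` class and step function names are hypothetical.

```python
# Scenario, as it might be worded in a feature file:
#   Given an empty shopping cart
#   When the user adds an item priced at 25.00 to their cart
#   Then the cart total is 25.00


class Cart:
    """Hypothetical cart used only for this illustration."""

    def __init__(self) -> None:
        self.items = []

    def add(self, name: str, price: float) -> None:
        self.items.append((name, price))

    @property
    def total(self) -> float:
        return sum(price for _, price in self.items)


def given_an_empty_cart() -> Cart:
    return Cart()


def when_the_user_adds_an_item(cart: Cart, name: str, price: float) -> None:
    cart.add(name, price)


def then_the_cart_total_is(cart: Cart, expected: float) -> None:
    assert cart.total == expected, f"expected {expected}, got {cart.total}"


def test_adding_an_item_updates_the_total():
    cart = given_an_empty_cart()
    when_the_user_adds_an_item(cart, "book", 25.00)
    then_the_cart_total_is(cart, 25.00)
```

Each step reads like the sentence it implements, which is what lets non-developers review the scenario.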

How to Implement BDD Effectively

Adopting BDD is about fostering communication and creating shared understanding. It works best when applied to user-facing features where behavior is a key driver of success. Use popular BDD frameworks like Cucumber or SpecFlow to translate your plain-language scenarios into automated tests.

To make BDD a core part of your software testing best practices, focus on these tips:

- Involve Everyone: Collaborate with business analysts, product owners, and developers when writing scenarios to capture all perspectives.

- Focus on Behavior, Not UI: Describe what the user wants to achieve, not how they click through the interface (e.g., "When the user adds an item to their cart" instead of "When the user clicks the 'Add to Cart' button").

- Use Concrete Examples: Clarify requirements with specific examples in your scenarios to avoid ambiguity.

- Treat Scenarios as Documentation: Keep your feature files updated so they serve as reliable, living documentation for the system’s behavior.

7. Embrace Discovery with Exploratory Testing

Exploratory testing is a powerful approach that champions a tester's freedom, curiosity, and creativity over rigid, pre-scripted test cases. Popularized by testing pioneers like James Bach and Elisabeth Hendrickson, this method combines test learning, design, and execution into a simultaneous, fluid process. Testers actively explore the application, using their experience and intuition to uncover defects that scripted tests might miss. It’s an unscripted journey of discovery that reveals how the software truly behaves.

Unlike scripted testing, where the path is predetermined, exploratory testing is about adapting in real-time. The tester’s next action is governed by what they learned from the previous one, allowing them to probe deeper into unexpected behavior and complex workflows. This dynamic approach is invaluable for finding subtle, context-dependent bugs and gaining a deeper understanding of the system's strengths and weaknesses.

This method is particularly effective for complex features where user interaction can vary widely. Tech giants like Microsoft have used exploratory testing to validate the Windows user experience, while Netflix employs it to ensure its content discovery features are intuitive and bug-free. Atlassian also famously combines exploratory testing with automated checks to maintain the quality of Jira.

How to Implement Exploratory Testing Effectively

Successful exploratory testing isn't random; it's a structured and focused investigation. Done with skill and discipline, it becomes a core part of your software testing best practices and complements your automated test suites.

To make exploratory testing a valuable part of your quality assurance process, focus on these tips:

- Set Time-Boxed Sessions: Allocate specific, uninterrupted blocks of time for testing (e.g., 60-90 minutes) to maintain focus and intensity.

- Use Testing Charters: Create a clear mission for each session, such as "Explore the user profile update workflow and identify any data validation issues." This provides direction without being overly restrictive.

- Document Key Findings: While you don't use scripts, you must document your journey. Take notes, capture screenshots, and record videos of interesting behaviors or defects you uncover.

- Pair with Other Approaches: Use exploratory testing to find gaps in your automated test coverage and to investigate areas where automated tests have flagged potential issues.

8. Elevate Quality with Robust Test Data Management (TDM)

Effective testing is impossible without good data. Test Data Management (TDM) is the practice of creating, managing, and maintaining high-quality data specifically for testing purposes. It ensures that testing environments have realistic, secure, and sufficient data to validate software functionality under various conditions. A solid TDM strategy prevents defects from slipping into production due to unrealistic or incomplete test scenarios.

This comprehensive approach covers everything from data creation and anonymization to subsetting and refreshing. It’s a foundational element of modern software testing best practices, ensuring that tests are both effective and compliant with data privacy regulations like GDPR. Proper TDM allows teams to simulate real-world usage accurately without exposing sensitive user information.

Leading organizations rely on sophisticated TDM to maintain quality. For instance, JPMorgan Chase uses synthetic data generation to test complex financial algorithms without using real customer data. Similarly, healthcare organizations employ data masking techniques to comply with HIPAA regulations while performing rigorous testing on patient management systems. These practices allow for thorough validation while upholding strict security and privacy standards.

How to Implement TDM Effectively

A mature TDM strategy moves beyond simply copying production data. It requires a thoughtful approach to data sourcing, governance, and maintenance. Use TDM when you need to test complex workflows, validate performance under load, or ensure compliance with privacy laws.

To integrate TDM into your quality assurance process, focus on these key tips:

- Implement Data Masking: Use tools to obfuscate or anonymize sensitive information like names, addresses, and credit card numbers from production data.

- Use Synthetic Data Generation: Create artificial, yet realistic, data to cover edge cases or when production data is scarce or too sensitive.

- Create Representative Data Subsets: Instead of using a full production database, create smaller, targeted subsets of data that accurately represent specific user scenarios.

- Automate Data Refresh Processes: Set up automated pipelines to regularly refresh your test environments with clean, relevant data to keep tests reliable.

- Establish Data Governance Policies: Define clear rules for who can access test data, how it should be used, and when it should be purged.
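Two of the tips above, data masking and synthetic data generation, can be sketched with the Python standard library. Treat this as an illustration only: the helper names, the salt, and the record fields are assumptions, and salted hashing is a simple pseudonymization approach rather than a vetted anonymization scheme for regulated data.

```python
import hashlib
import random
from typing import Dict, List


def mask_email(email: str, salt: str = "test-env") -> str:
    """Replace an email with a stable pseudonym so joins across tables still work."""
    digest = hashlib.sha256((salt + email.lower()).encode("utf-8")).hexdigest()[:12]
    return f"user_{digest}@example.com"


def mask_card_number(card_number: str) -> str:
    """Hide every digit except the last four."""
    digits = [c for c in card_number if c.isdigit()]
    return "*" * (len(digits) - 4) + "".join(digits[-4:])


def synthetic_customers(count: int, seed: int = 42) -> List[Dict]:
    """Generate repeatable fake customers; the same seed yields the same data."""
    rng = random.Random(seed)
    return [
        {
            "id": i,
            "email": f"customer{i}@example.com",
            "age": rng.randint(18, 90),
            "balance": round(rng.uniform(0, 10_000), 2),
        }
        for i in range(1, count + 1)
    ]
```

Seeding the generator keeps test runs reproducible, which makes a failure caused by a specific record easy to replay.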

9. Defect Prevention and Root Cause Analysis

While finding and fixing bugs is crucial, the most effective software testing best practices aim to stop defects from happening in the first place. This proactive approach, known as Defect Prevention, shifts the focus from reactive bug squashing to proactive quality assurance. It centers on identifying the root causes of issues and implementing systemic changes to prevent their recurrence.

This philosophy is about asking "why" a defect occurred, not just "what" the defect is. By analyzing patterns, understanding process gaps, and addressing the foundational reasons for errors, teams can significantly reduce the number of bugs that enter the codebase. This method draws inspiration from quality management pioneers like W. Edwards Deming and methodologies like Six Sigma, which have been proven effective in manufacturing and are equally powerful in software development. Companies like NASA rely on rigorous defect prevention for mission-critical software where failure is not an option.

How to Implement Defect Prevention Effectively

Integrating Root Cause Analysis (RCA) into your workflow is the key to successful defect prevention. Instead of just closing a bug ticket, take the time to investigate its origins. This practice is most valuable for recurring or high-impact defects that signal a deeper problem in your development or testing processes.

To make defect prevention a core part of your software testing best practices, focus on these tips:

- Conduct Regular Defect Reviews: Hold meetings to analyze recent bugs, look for patterns, and discuss potential causes.

- Use Analysis Tools: Employ techniques like Fishbone (Ishikawa) diagrams to visually map out all potential causes of a problem.

- Track Defect Metrics: Monitor trends over time, such as defect density or defect escape rates, to identify areas for improvement.

- Share Learnings Across Teams: Ensure that insights from a root cause analysis are communicated to all developers to prevent similar mistakes. For more on improving your defect lifecycle, explore our guide on bug reporting best practices.
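The two metrics named above are simple ratios. The sketch below uses common definitions, defects per thousand lines of code for density and the share of all defects that reached production for escape rate; teams sometimes define them differently, so treat the formulas and the sample numbers as assumptions.

```python
def defect_density(defects: int, kloc: float) -> float:
    """Defects per thousand lines of code (KLOC)."""
    if kloc <= 0:
        raise ValueError("kloc must be positive")
    return defects / kloc


def defect_escape_rate(found_in_production: int, found_before_release: int) -> float:
    """Share of all known defects that were only found after release."""
    total = found_in_production + found_before_release
    if total == 0:
        return 0.0
    return found_in_production / total


# Illustrative numbers: 30 defects in a 12 KLOC module,
# 5 defects found in production versus 45 caught before release.
print(defect_density(30, 12.0))
print(defect_escape_rate(5, 45))
```

Tracking these values release over release matters more than any single reading, because the trend shows whether prevention work is paying off.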

Best Practices Comparison Matrix

| Methodology / Practice | Implementation Complexity 🔄 | Resource Requirements ⚡ | Expected Outcomes 📊 | Ideal Use Cases 💡 | Key Advantages ⭐ |
|---|---|---|---|---|---|
| Test-Driven Development (TDD) | Medium – Requires discipline & cultural shift | Moderate – Developer time for tests and design | High code coverage, improved design, reduced debugging | Complex business logic requiring reliable code | Better design, regression safety, dev confidence |
| Continuous Integration & Testing | High – Setup and maintenance of pipelines and infra | High – Automated test infrastructure & build systems | Early integration issue detection, faster releases | Teams requiring frequent integration and delivery | Early issue detection, improved collaboration |
| Risk-Based Testing | Medium – Requires ongoing risk assessment | Variable – Focused testing effort based on risk | Efficient testing, prioritized risk mitigation | High-stakes apps where critical functionality must be ensured | Optimized resource use, better ROI |
| Test Automation Pyramid | Medium – Designing layered test suites | Moderate – Investment in different test levels | Fast feedback, balanced test coverage | Projects emphasizing test automation efficiency | Fast unit test feedback, reduced maintenance cost |
| Shift-Left Testing | High – Cultural change & early tester involvement | Moderate – Early testing resources & training | Lower defect cost, earlier defect detection | Organizations aiming to reduce late-stage bugs | Reduced fix cost, better requirements understanding |
| Behavior-Driven Development (BDD) | Medium – Adoption of new syntax and collaboration | Moderate – Collaboration time and tooling | Improved stakeholder communication, aligned behavior | Projects needing business-technical alignment | Clear communication, living documentation |
| Exploratory Testing | Low – Minimal setup, highly skill-dependent | Low to Moderate – Skilled testers needed | Discovery of unexpected defects, rapid feedback | Early stage, complex or poorly documented systems | Flexibility, defect discovery beyond scripts |
| Test Data Management | High – Setup for data creation, masking, governance | High – Tooling, infrastructure, and compliance | Realistic testing data, privacy compliance | Regulated industries, large data-dependent apps | Improved test reliability, compliance adherence |
| Defect Prevention & Root Cause Analysis | Medium to High – Requires process and cultural investment | Moderate – Time and analytical tooling | Reduced defect rates, improved quality processes | Organizations focused on long-term quality improvement | Lower defect rates, better team learning |

From Best Practices to Better Products

Moving from a basic testing process to a mature, quality-driven one is a journey, not a destination. The nine software testing best practices we’ve explored are not just individual tactics; they are interconnected components of a holistic strategy. They represent a fundamental shift in mindset, from viewing testing as a final gatekeeper to embedding quality into every stage of the software development lifecycle.

Embracing practices like Test-Driven Development (TDD) and Behavior-Driven Development (BDD) ensures that quality is considered from the very first line of code. Implementing a robust Test Automation Pyramid helps you focus your automation efforts where they deliver the most value, building a stable and efficient testing suite. This technical foundation is what makes continuous integration and continuous testing not just possible, but powerful.

Turning Theory into a Strategic Advantage

Adopting these methodologies is about more than just finding bugs. It's about building a predictable, efficient, and resilient development process.

- Shift-Left Testing: By moving testing activities earlier, you catch defects when they are far cheaper and easier to fix. This proactive approach prevents issues from escalating into major roadblocks later in the cycle.

- Risk-Based Testing and Exploratory Testing: These practices inject critical thinking and human ingenuity into your process. They ensure you prioritize your efforts on the features that matter most to your users and business, while also uncovering the unexpected issues that automated scripts might miss.

- Defect Prevention and Root Cause Analysis: This is where your team truly learns and grows. Instead of just fixing a bug, you investigate why it happened. This discipline turns every issue into a lesson, strengthening your processes and preventing entire classes of future defects.

Ultimately, mastering these software testing best practices transforms your quality assurance from a cost center into a strategic driver of business value. It leads to faster releases, reduced development costs, and most importantly, higher customer satisfaction and loyalty. Your team spends less time fighting fires and more time innovating and delivering features that delight your users.

The Central Role of Clear Communication

A recurring theme across all these practices is the need for seamless communication and collaboration. A bug report is the primary artifact of communication between QA, development, and support teams. When that report is ambiguous or lacks crucial context, it grinds the entire resolution process to a halt. This is where the right tooling becomes a non-negotiable part of implementing best practices effectively.

Vague text descriptions like "the button didn't work" create friction and waste valuable time. To make practices like root cause analysis and continuous testing truly effective, you need bug reports that are precise, detailed, and immediately actionable. This means providing developers with a complete picture: a visual recording of the user's actions, console logs, network requests, and other essential system data. By removing the guesswork, you empower your team to reproduce, diagnose, and resolve issues with unprecedented speed and accuracy, turning a potential bottleneck into a streamlined workflow.

Ready to eliminate the back-and-forth of bug reporting and supercharge your testing workflow? Screendesk replaces ambiguous text with clear, contextual video reports that capture everything your developers need to fix bugs faster. See how you can implement these software testing best practices more effectively by visiting Screendesk today.