In the fast-paced world of customer support, ensuring quality is no longer a final-stage checkbox; it's the engine driving customer satisfaction and retention. Traditional quality assurance methods often fall short, leading to slow resolutions, frustrated customers, and overworked agents. The solution lies in adopting modern, proactive best practices for quality assurance that integrate seamlessly into your team's workflow. This approach shifts QA from a reactive, problem-finding task to a proactive, quality-building discipline.

This article breaks down 10 essential best practices, moving beyond generic advice to provide actionable strategies tailored for today's dynamic support environments. We will explore how to implement proven strategies like continuous integration, risk-based testing, and behavior-driven development to build a resilient and effective quality framework. At its core, this revolution in QA is about one fundamental goal: continuously improving customer service to guarantee top-tier interactions.

You will learn how to leverage these techniques to not only catch issues earlier but also to prevent them entirely. We'll provide specific, practical implementation details, including how to structure test automation, conduct effective root cause analysis, and manage test data securely. Get ready to transform your QA process from a necessary chore into a powerful competitive advantage that delivers exceptional customer experiences, every time.

1. Shift-Left Testing

Shift-left testing is a proactive quality assurance strategy that integrates testing much earlier in the development lifecycle. Instead of waiting until a feature or product is fully built, this approach "shifts" testing activities to the left, closer to the initial stages like requirements gathering, design, and early coding. The core idea is simple: find and fix bugs when they are smaller, simpler, and far less expensive to resolve.

This method transforms quality assurance from a final-gate inspection into a continuous, collaborative process. It's one of the most effective best practices for quality assurance because it prevents defects from being baked into the product, rather than just detecting them at the end. For customer support teams, this means fewer bug-related tickets, more stable tools, and a better overall customer experience.

Why It's a Top Practice

Adopting a shift-left model can deliver significant, measurable results. It moves the focus from finding bugs to preventing them, which fundamentally improves software quality and development efficiency. This approach is not just a theory; large technology companies have reported gains from it. For example, Microsoft's implementation of shift-left principles reportedly led to a 20-25% reduction in post-release defects. Similarly, IBM reportedly saw a 40% reduction in testing costs after shifting left, which points to the financial benefits.

Actionable Tips for Implementation

Getting started with shift-left testing doesn't require a complete overhaul. You can introduce these practices incrementally:

- Involve QA Early: Invite QA engineers to requirements review and design meetings. Their unique perspective can help identify potential issues, ambiguities, and edge cases before a single line of code is written.

- Automate Unit Tests: Encourage developers to write automated unit tests for their code. These tests form the foundation of a shift-left strategy by catching bugs at the most granular level.

- Implement Peer Code Reviews: Establish a formal code review process with a quality-focused checklist. This ensures another set of eyes validates code for logic, style, and potential flaws.

- Use Static Analysis Tools: Integrate static analysis tools into your development pipeline. These tools automatically scan code for common vulnerabilities, bugs, and "code smells" without needing to execute the program.
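
As a minimal sketch of the unit-testing tip above, the Python example below tests a small helper from a support tool. The function `first_response_deadline_hours`, the priority labels, and the hour values are all hypothetical and exist only for illustration.

```python
import unittest

# Hypothetical SLA table: hours allowed before the first agent response.
SLA_HOURS = {"urgent": 1, "high": 4, "normal": 24}


def first_response_deadline_hours(priority: str) -> int:
    """Return the first-response deadline for a ticket priority."""
    key = priority.strip().lower()
    if key not in SLA_HOURS:
        raise ValueError(f"unknown priority: {priority!r}")
    return SLA_HOURS[key]


class FirstResponseDeadlineTest(unittest.TestCase):
    def test_known_priority(self):
        self.assertEqual(first_response_deadline_hours("urgent"), 1)

    def test_input_is_normalized(self):
        # Edge case: stray whitespace and mixed case from a web form.
        self.assertEqual(first_response_deadline_hours("  High "), 4)

    def test_unknown_priority_is_rejected(self):
        with self.assertRaises(ValueError):
            first_response_deadline_hours("whenever")
```

Saved to a file, these tests can be run with `python -m unittest`. Each test checks one behavior, so a failure points directly at the rule that broke.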

2. Risk-Based Testing

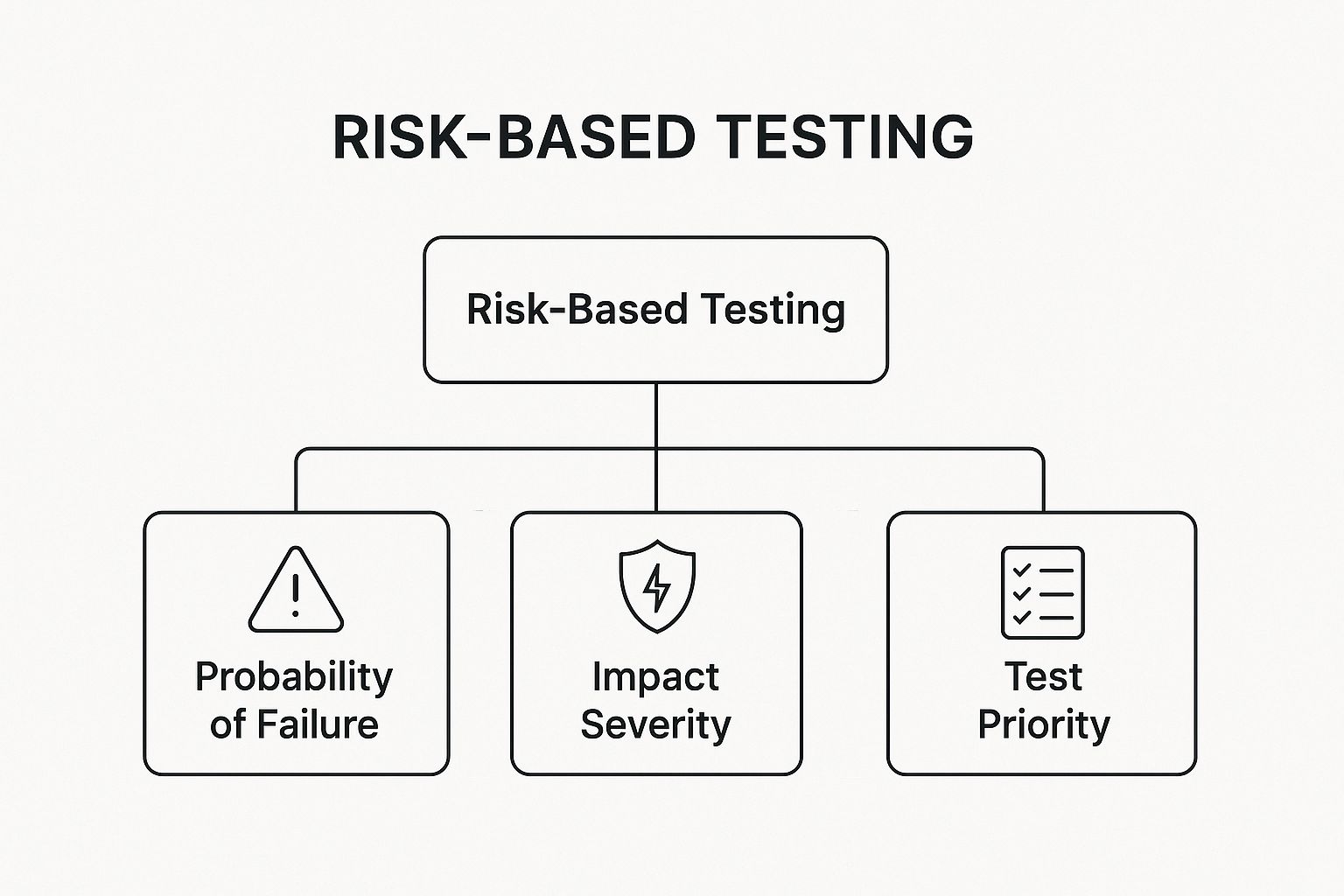

Risk-based testing is a strategic QA approach that prioritizes testing efforts based on the probability and impact of potential failures. Instead of trying to test everything equally, this method allocates the most resources to features where a bug would cause the most damage to the business or the user. It’s about being smart with your time and focusing on what truly matters.

This strategy is one of the best practices for quality assurance because it directly aligns testing with business objectives. It ensures that the most critical functionalities, like payment processing in an e-commerce app or patient data security in healthcare software, receive the most rigorous examination. For customer support, this translates to fewer high-severity incidents and more stable, reliable software for users.

The accompanying diagram illustrates the core hierarchy of risk-based testing, showing how the combination of failure probability and impact severity determines the final test priority.

This visualization highlights that test priority isn't arbitrary; it's a calculated decision derived from a careful analysis of potential business and user risks.

Why It's a Top Practice

Adopting risk-based testing allows teams to optimize their test coverage and achieve maximum effectiveness with limited resources. Popularized by experts like Rex Black and codified in standards like ISO 29119, this approach provides a defensible rationale for testing decisions. Banking software, for example, uses it to focus intensely on transaction processing and security, while an e-commerce platform would prioritize its payment gateway and inventory management systems, mitigating the biggest financial and operational risks first.

Actionable Tips for Implementation

You can integrate risk-based testing into your workflow by taking these focused steps:

- Involve Business Stakeholders: Conduct risk identification workshops with product managers, developers, and business analysts. Their input is crucial for accurately assessing the business impact of potential failures.

- Use Historical Data: Analyze past bug reports and support tickets to identify recurring problem areas. This data provides an objective measure of which features have a higher probability of failure.

- Create a Risk Matrix: Develop a simple risk matrix or heat map that plots probability against impact. This visual tool helps stakeholders quickly understand and agree on test priorities.

- Document and Revisit: Keep a record of your risk assessments and the resulting test strategies. Revisit this documentation regularly, especially when new features are added or business priorities change.
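
The risk matrix described above can be sketched in a few lines of Python. This is an illustration under stated assumptions: the 1-to-5 scales, the multiplication rule, the priority thresholds, and the example features are hypothetical, and a real team would agree on its own values in the risk workshop.

```python
from dataclasses import dataclass


@dataclass
class Feature:
    name: str
    probability: int  # likelihood of failure, 1 (rare) to 5 (frequent)
    impact: int       # business/user damage, 1 (minor) to 5 (severe)

    @property
    def risk_score(self) -> int:
        return self.probability * self.impact


def priority(score: int) -> str:
    """Map a risk score to a test priority; thresholds are illustrative."""
    if score >= 15:
        return "high"
    if score >= 6:
        return "medium"
    return "low"


features = [
    Feature("payment processing", probability=3, impact=5),
    Feature("profile avatar upload", probability=4, impact=1),
    Feature("inventory sync", probability=2, impact=4),
]

# Test the riskiest features first.
ranked = sorted(features, key=lambda f: f.risk_score, reverse=True)
for f in ranked:
    print(f"{f.name}: score={f.risk_score}, priority={priority(f.risk_score)}")
```

Note that the avatar upload fails more often than payment processing in this made-up data, yet it ranks last because a failure there does little damage.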

3. Test Automation Pyramid

The Test Automation Pyramid is a strategic framework that guides how to balance and distribute automated tests across different application layers. Popularized by experts like Mike Cohn and Martin Fowler, it advises building a large base of fast unit tests, a smaller layer of integration tests, and a minimal number of slow, brittle UI tests at the top. This structure creates a stable, efficient, and maintainable testing suite.

This model is one of the foundational best practices for quality assurance because it optimizes test feedback loops and reduces long-term maintenance costs. For customer support, a well-structured test pyramid means fewer bugs escape to production, resulting in more reliable tools and a significant drop in customer-reported issues related to core functionality.

Why It's a Top Practice

Adopting the Test Automation Pyramid helps teams avoid the "ice-cream cone" anti-pattern, where the suite relies heavily on slow, expensive UI tests and has few unit tests beneath them. Following the pyramid instead leads to faster builds, quicker bug detection, and more reliable test results. Google famously follows this model, often aiming for a 70% unit, 20% integration, and 10% UI test distribution. Similarly, Spotify leverages this strategy to effectively test its complex microservices architecture, ensuring individual services are robust before integrating them. The pyramid provides a clear roadmap for creating a cost-effective and maintainable automation strategy.

Actionable Tips for Implementation

Implementing the pyramid requires a disciplined approach to test creation. You can get started with these focused steps:

- Build a Strong Foundation: Prioritize creating a comprehensive suite of unit tests. These should be the first tests written for any new feature, as they are fast to run and pinpoint failures precisely.

- Focus Integration on Boundaries: Use integration tests to verify interactions between services, modules, or with external systems like databases. Focus only on these critical connection points.

- Limit UI Tests: Reserve UI tests for critical, end-to-end user journeys, such as the login process or the checkout flow. These tests exercise the whole system the way a user sees it, but they are also the most fragile and expensive tests to maintain.

- Monitor Your Pyramid: Regularly analyze your test suite metrics to ensure you are maintaining the desired pyramid shape. Track execution times and failure rates at each level to identify imbalances.
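
One way to monitor the shape of a suite is to compute each level's share of the total and check that the counts narrow toward the top. The sketch below assumes test counts are already available per level (for example, from a test report); the level names and numbers are hypothetical.

```python
def pyramid_shares(counts: dict[str, int]) -> dict[str, float]:
    """Return each level's share of the total number of automated tests."""
    total = sum(counts.values())
    if total == 0:
        raise ValueError("no tests counted")
    return {level: n / total for level, n in counts.items()}


def is_pyramid_shaped(counts: dict[str, int]) -> bool:
    """True when unit > integration > ui, i.e. the suite narrows toward the top."""
    return counts["unit"] > counts["integration"] > counts["ui"]


# Hypothetical counts taken from a test report.
counts = {"unit": 700, "integration": 200, "ui": 100}
shares = pyramid_shares(counts)

for level, share in shares.items():
    print(f"{level}: {share:.0%}")
print("pyramid shaped:", is_pyramid_shaped(counts))
```

A check like this could run on a schedule and warn the team when UI tests start to outnumber integration tests.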

4. Continuous Integration and Continuous Testing

Continuous Integration (CI) and Continuous Testing (CT) work together to create an automated, self-validating development pipeline. In this model, every time a developer commits a code change, it automatically triggers a build and a series of tests. This immediate feedback loop ensures that new code integrates smoothly and doesn't introduce regressions, catching bugs within minutes of being written.

This combination fundamentally accelerates development while maintaining high standards. It is one of the definitive best practices for quality assurance because it transforms testing from a separate, manual phase into an integral, automated part of the development workflow. For customer support teams, this translates to a more stable product, fewer unexpected issues after a release, and confidence that the software is constantly being verified for quality.

Why It's a Top Practice

Adopting a CI/CT pipeline provides instant validation and dramatically reduces integration risks. Instead of discovering major conflicts days or weeks later, teams find and fix them immediately. This approach is central to modern, high-velocity software development. For instance, Etsy implemented a CI/CT culture that enabled it to deploy code over 50 times per day with confidence. Similarly, Facebook's robust pipeline handles thousands of commits daily, ensuring new features are tested and integrated seamlessly without disrupting the user experience.

Actionable Tips for Implementation

Building a CI/CT pipeline can be done incrementally. You don't need a perfect system on day one.

- Start with a Simple CI Setup: Use tools like Jenkins, GitLab CI, or GitHub Actions to automate your first build and run a small set of critical tests.

- Keep Feedback Loops Short: Ensure your primary test suite runs in under 10 minutes. Quick feedback is essential for developers to stay productive and fix issues while the context is fresh.

- Parallelize Your Tests: As your test suite grows, use parallel execution to run multiple tests simultaneously. This drastically reduces the total pipeline runtime.

- Monitor Pipeline Performance: Regularly review your CI/CT pipeline's health and speed. Optimizing slow tests and removing flaky ones is crucial for maintaining an efficient workflow. For more insights on this topic, you can learn more about the benefits of process automation on blog.screendesk.io.
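
To make the advice about flaky tests concrete, here is a small Python sketch that flags tests that both passed and failed on the same commit. The record format and the test names are assumptions; real CI tools export run history in their own formats.

```python
from collections import defaultdict

# Each record: (test name, commit id, passed?). Hypothetical CI history.
runs = [
    ("test_login", "a1f3", True),
    ("test_login", "a1f3", True),
    ("test_checkout", "a1f3", True),
    ("test_checkout", "a1f3", False),  # same commit, different outcome
    ("test_refund", "b7c2", False),
    ("test_refund", "b7c2", False),    # consistent failure: likely a real bug
]


def find_flaky_tests(runs):
    """Return names of tests that both passed and failed on one commit."""
    outcomes = defaultdict(set)
    for name, commit, passed in runs:
        outcomes[(name, commit)].add(passed)
    return sorted({name for (name, _), seen in outcomes.items() if len(seen) == 2})


print(find_flaky_tests(runs))
```

The key idea is that the code did not change between the two runs, so a differing result points at the test or its environment rather than at the product.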

5. Behavior-Driven Development (BDD)

Behavior-Driven Development (BDD) is a collaborative quality assurance approach that bridges the communication gap between technical developers, QA testers, and non-technical business stakeholders. It uses natural, human-readable language to describe how an application should behave from a user's perspective. The goal is to create a shared understanding of what needs to be built before development even begins, ensuring the final product meets everyone's expectations.

This method centers on writing "feature files" that outline user scenarios in a structured Given-When-Then format. This makes it one of the top best practices for quality assurance because it aligns development with business requirements and user needs. For customer support teams, this clarity means features are built correctly the first time, reducing confusion, user error, and support ticket volume related to misunderstood functionality.

Why It's a Top Practice

Adopting BDD fosters clear communication and ensures that software development is guided by concrete, desired user behaviors rather than abstract technical specifications. This alignment prevents costly rework and misinterpretations down the line. BDD's value is proven by its adoption across major organizations. For instance, the BBC successfully used BDD to develop its iPlayer platform, ensuring the complex system met diverse user expectations. Likewise, the UK's Government Digital Service relies on BDD to build accessible and user-centric public services, proving its effectiveness in large-scale, high-stakes projects.

Actionable Tips for Implementation

Integrating BDD into your workflow can be done systematically to maximize its collaborative benefits:

- Start with High-Value Features: Begin by applying BDD to a critical or complex user-facing feature. This allows the team to learn the process on a component where shared understanding is most crucial.

- Involve Product Owners and Support: Actively involve product owners, business analysts, and even customer support leads in writing and reviewing BDD scenarios. Their input ensures the scenarios reflect real-world user behavior and business goals.

- Keep Scenarios Focused: Each scenario should test one specific behavior. Avoid cramming multiple "When-Then" pairs into a single scenario, which can make it confusing and hard to maintain.

- Use a "Background" Step for Repetition: For steps that are repeated in every scenario within a feature file (like a user being logged in), use the "Background" keyword to define them once. This reduces duplication and keeps feature files clean.
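
BDD tools such as Cucumber or behave usually express scenarios in feature files written in Gherkin. The sketch below is not a feature file; it mirrors the same Given-When-Then structure in a plain Python test so the shape of a focused scenario is visible. The `Cart` class is hypothetical, and prices are assumed to be integer cents.

```python
class Cart:
    """Hypothetical shopping cart used only for this illustration."""

    def __init__(self):
        self.items = {}  # sku -> (price_cents, quantity)

    def add(self, sku: str, price_cents: int, qty: int = 1) -> None:
        if qty < 1:
            raise ValueError("quantity must be at least 1")
        _, current_qty = self.items.get(sku, (price_cents, 0))
        self.items[sku] = (price_cents, current_qty + qty)

    def total_cents(self) -> int:
        return sum(price * qty for price, qty in self.items.values())


def test_adding_same_item_twice_increases_quantity():
    # Given an empty cart
    cart = Cart()
    # When the customer adds the same item twice
    cart.add("mug", price_cents=800)
    cart.add("mug", price_cents=800)
    # Then the cart holds two mugs and the total reflects both
    assert cart.items["mug"][1] == 2
    assert cart.total_cents() == 1600
```

The scenario tests exactly one behavior, which keeps the test name, the steps, and any failure message aligned.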

6. Exploratory Testing

Exploratory testing is an unscripted, human-centric approach to quality assurance where the tester's learning, test design, and execution happen simultaneously. Instead of following rigid, pre-written test cases, testers actively investigate the application, using their creativity and critical thinking to uncover defects that automated or scripted tests often miss. It treats testing as a cognitive activity, not just a procedural one.

This method is one of the most powerful best practices for quality assurance because it mirrors how real users interact with software: unpredictably and intuitively. For customer support teams, insights from exploratory testing are invaluable, as they often reveal usability quirks, confusing workflows, and edge-case bugs that lead to frustrated customers and support tickets.

Why It's a Top Practice

Exploratory testing excels at finding complex, non-obvious defects by leveraging human intelligence. While automated scripts validate expected outcomes, exploratory testing uncovers unexpected system behaviors. Its value is proven by industry leaders; Microsoft has long used exploratory testing to refine the usability of its Windows operating system. Similarly, Atlassian holds dedicated exploratory testing sessions to improve products like Jira and Confluence, ensuring they handle real-world scenarios gracefully. This approach, pioneered by thinkers like James Bach, adds a crucial layer of intelligent investigation to the QA process.

Actionable Tips for Implementation

Introducing exploratory testing is straightforward and can be done without formal tooling. You can structure your efforts with these tips:

- Use Time-Boxed Sessions: Conduct focused testing sessions lasting 90-120 minutes. This structure maintains intensity and prevents tester burnout while ensuring clear start and end points.

- Create Clear Charters: Guide each session with a clear charter or mission. For example, a charter could be: "Explore the new checkout process and identify any potential points of user confusion or payment failure."

- Take Detailed Notes: Document observations, take screenshots, and record videos during sessions. This evidence is critical for creating clear, reproducible bug reports for the development team.

- Pair Up for Better Results: Have a tester pair with another tester or a developer. This collaborative approach, known as pair testing, fosters new ideas and provides immediate feedback on findings.

- Focus on High-Risk Areas: Prioritize exploration on parts of the application that have recently changed or are known to be complex and error-prone.

7. Defect Prevention and Root Cause Analysis

Defect prevention is a fundamental quality assurance strategy that shifts focus from simply finding and fixing bugs to systematically eliminating their root causes. Instead of reacting to problems after they appear, this approach proactively analyzes defect patterns, refines development processes, and implements safeguards to stop similar issues from ever happening again. The goal is to move beyond a reactive cycle of "detect and correct" to a proactive state of "prevent and perfect."

This method is one of the most impactful best practices for quality assurance because it addresses the source of problems, not just the symptoms. For customer support teams, this translates directly to a lower volume of recurring issues and more predictable software behavior. By preventing defects, organizations ensure a higher quality product, which reduces customer frustration and support workload.

Why It's a Top Practice

Adopting defect prevention and root cause analysis creates a powerful feedback loop for continuous improvement. Rather than treating each bug as an isolated incident, it treats them as learning opportunities to strengthen the entire development lifecycle. This philosophy has been proven effective by industry leaders. For example, Toyota's lean manufacturing principles, when applied to software, drastically reduce waste and errors. Similarly, Motorola’s Six Sigma implementation in its software processes led to a measurable decrease in defect rates by focusing on process control and root cause elimination. This approach builds a culture of quality from the ground up.

Actionable Tips for Implementation

Integrating defect prevention doesn't have to be complex. You can begin by introducing these targeted activities into your workflow:

- Use Fishbone Diagrams: When a significant defect is found, use a fishbone (or Ishikawa) diagram to visually map out all potential causes related to people, processes, tools, and technology. This helps teams identify the true root cause rather than the most obvious symptom.

- Hold Regular Defect Triage Meetings: Establish a routine meeting where development, QA, and support teams review recent defects. The focus should be on understanding why the bugs occurred and brainstorming preventive actions.

- Track Prevention Metrics: Monitor metrics like defect density, the percentage of recurring bugs, and the cost of rework. These data points will demonstrate the effectiveness of your prevention efforts and highlight areas needing more attention. For more information, you can explore established quality assurance procedures on blog.screendesk.io.

- Share Lessons Learned: Create a centralized knowledge base or hold cross-team "post-mortem" sessions to share insights from root cause analyses. This ensures one team's lesson becomes a preventative measure for the entire organization.
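
Two of the prevention metrics mentioned above are simple enough to compute by hand. The sketch below assumes defect density is measured per thousand lines of code (KLOC) and that each defect has been tagged with a root cause during triage; the function names and sample data are hypothetical.

```python
def defect_density(defects: int, kloc: float) -> float:
    """Defects per thousand lines of code (KLOC)."""
    if kloc <= 0:
        raise ValueError("kloc must be positive")
    return defects / kloc


def recurring_rate(root_causes: list[str]) -> float:
    """Share of defects whose root cause already appeared earlier in the list."""
    if not root_causes:
        return 0.0
    seen = set()
    recurring = 0
    for cause in root_causes:
        if cause in seen:
            recurring += 1
        seen.add(cause)
    return recurring / len(root_causes)


# Hypothetical root causes recorded in triage, oldest first.
causes = [
    "missing validation",
    "timeout config",
    "missing validation",
    "missing validation",
]

print(f"density: {defect_density(12, 48.0):.2f} defects/KLOC")
print(f"recurring: {recurring_rate(causes):.0%}")
```

A falling recurring rate over several releases is a reasonable sign that root cause fixes are holding.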

8. Test Data Management

Test data management (TDM) is the systematic practice of planning, designing, storing, and managing the data required for software testing. The goal is to provide testers and developers with realistic, secure, and compliant data that accurately mirrors production environments. This process involves creating high-quality datasets using techniques like data masking to hide sensitive information, synthetic data generation to create artificial records, and data subsetting to use a smaller, manageable portion of production data.

Effective TDM is one of the most critical best practices for quality assurance because poor data can lead to missed bugs, flawed performance tests, and security vulnerabilities. For customer support teams, robust test data ensures that the scenarios they replicate are true to life, leading to more accurate troubleshooting and a better understanding of potential user issues before they escalate. It prevents the classic "it works on my machine" problem by standardizing the data environment.

Why It's a Top Practice

Implementing a strong TDM strategy directly impacts the reliability and security of testing. It allows teams to test thoroughly without exposing real customer information, which is crucial for compliance with regulations like GDPR and HIPAA. Leading data solution providers like Informatica and Broadcom have pioneered TDM tools that demonstrate its value. For instance, a major bank can use masked customer data to rigorously test a new payment feature, ensuring functionality without breaching privacy. Similarly, a healthcare provider can generate synthetic patient data to verify its systems are HIPAA-compliant.

Actionable Tips for Implementation

You can build a solid TDM foundation by integrating these practical steps into your QA workflow:

- Implement Data Masking Early: Introduce data masking and anonymization processes at the beginning of the development cycle. This ensures sensitive data is never exposed in non-production environments.

- Create Reusable Data Templates: Develop and store templates of test data for common scenarios, such as new user registration or failed payment attempts. This speeds up test setup and ensures consistency.

- Automate Data Refresh Processes: Set up automated jobs to refresh test environments with clean, up-to-date data. This prevents data staleness and ensures tests remain relevant and reliable.

- Establish Clear Data Policies: Define and enforce policies for data retention and cleanup in test environments. This practice minimizes storage costs and reduces the risk of old data causing test failures.
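
As a rough illustration of data masking, the Python sketch below replaces personal fields in a customer record before it enters a test environment. The field names, the salt value, and the masking rules are assumptions, and whether this level of masking is sufficient for a particular regulation is something to confirm with your compliance team.

```python
import hashlib


def mask_email(email: str, salt: str = "test-env-salt") -> str:
    """Replace an email with a stable pseudonym at a reserved example domain."""
    digest = hashlib.sha256((salt + email.lower()).encode("utf-8")).hexdigest()
    return f"user_{digest[:12]}@example.com"


def mask_record(record: dict) -> dict:
    """Return a masked copy of the record; the original is left untouched."""
    masked = dict(record)
    masked["email"] = mask_email(record["email"])
    masked["name"] = "Test User"
    # Keep only the last four digits of the card number.
    masked["card"] = "*" * 12 + record["card"][-4:]
    return masked


# Hypothetical production-like record.
customer = {
    "name": "Ada Lovelace",
    "email": "Ada@Example.org",
    "card": "4111111111111111",
    "plan": "pro",
}
print(mask_record(customer))
```

Because the email pseudonym is deterministic, the same customer maps to the same masked address in every table, so joins between masked datasets still work.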

9. Performance Testing Integration

Performance testing integration involves weaving performance validation throughout the entire development lifecycle, rather than treating it as a one-time check before launch. This approach incorporates load, stress, and endurance testing directly into the continuous integration and delivery (CI/CD) pipeline. By doing so, teams ensure that the application consistently meets performance standards with every new code change or feature release.

This proactive method is one of the most crucial best practices for quality assurance, as it prevents performance degradation from creeping into the product. For customer support teams, this translates to a fast, responsive application, fewer complaints about slowness or crashes during peak times, and higher customer satisfaction. It ensures the system can handle real-world user traffic without failing.

Why It's a Top Practice

Integrating performance testing from the start prevents last-minute disasters and costly architectural changes. It shifts performance from an afterthought to a core feature, guaranteeing a reliable user experience. Netflix is a prime example; its continuous performance testing and Chaos Engineering principles ensure its streaming service remains stable for millions of users, even under extreme conditions. Similarly, LinkedIn integrates performance validation to ensure its platform features remain fast and responsive as they evolve.

Actionable Tips for Implementation

You can begin integrating performance testing without disrupting your entire workflow. Start small and build momentum with these steps:

- Establish Performance Baselines: Define acceptable performance metrics, like response times and error rates, under normal load. This "performance budget" becomes the benchmark for all future tests.

- Start with Simple Load Tests: Begin by creating basic automated load tests that simulate a realistic number of users. Tools like JMeter or k6 can be integrated into your CI/CD pipeline to run these tests automatically.

- Use Production-Like Environments: Your testing environment should mirror the production setup as closely as possible. This ensures that test results are accurate and relevant to real-world performance.

- Create Performance Scripts with Functional Tests: As developers write functional tests for new features, encourage them to create corresponding performance test scripts. This makes performance a shared responsibility from day one.
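
A performance budget can be enforced with a small check that runs after a load test. The sketch below assumes the load tool has already produced a list of response times and an error count; the 500 ms and 1% thresholds are placeholder values, and the nearest-rank percentile is one of several common definitions.

```python
import math


def percentile(samples: list[float], pct: float) -> float:
    """Nearest-rank percentile of the samples."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def within_budget(latencies_ms, errors, p95_budget_ms=500.0, max_error_rate=0.01):
    """Return True when p95 latency and error rate both meet the budget."""
    p95 = percentile(latencies_ms, 95)
    error_rate = errors / len(latencies_ms)
    return p95 <= p95_budget_ms and error_rate <= max_error_rate


# Hypothetical results: 95 fast responses and 5 slow ones, no errors.
latencies = [100.0] * 95 + [900.0] * 5
print("within budget:", within_budget(latencies, errors=0))
```

In a pipeline, a script like this would exit with a non-zero status when the budget is exceeded so the build fails visibly.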

10. Cross-Functional Quality Teams

Cross-functional quality teams dismantle the traditional siloed approach where QA operates separately from development. This modern practice involves embedding QA professionals directly into development teams, fostering a culture of shared ownership for quality. Team members from diverse disciplines, including developers, testers, product owners, and designers, collaborate closely from the project's inception.

This model is one of the most impactful best practices for quality assurance because it ensures quality is not an afterthought but an integral part of the development process. For customer support teams, this translates into fewer product escalations and a more reliable product, as quality is collaboratively built-in rather than inspected at the end. Effective quality assurance often relies on collaboration across departments. To foster these partnerships, consider proven strategies for managing cross-functional teams to align goals and enhance communication.

Why It's a Top Practice

Integrating quality experts into development squads accelerates feedback loops and enhances problem-solving. It moves quality from a "department" to a "team responsibility," leading to higher-quality software and faster delivery cycles. This structure is a cornerstone of successful Agile and DevOps transformations. For example, Spotify’s renowned "squad" model embeds quality assistance roles within autonomous teams, enabling them to build, test, and release features independently and with high confidence. Similarly, banking giant ING successfully transitioned to cross-functional "tribes," which helped break down barriers and improve product stability.

Actionable Tips for Implementation

Transitioning to a cross-functional model can be done systematically to ensure a smooth adoption:

- Define Clear Quality Roles: Establish specific responsibilities for embedded QA members. Their role should shift from sole gatekeeper to quality coach, mentor, and advocate within the team.

- Provide Cross-Training: Encourage developers to learn testing techniques and testers to understand development principles. This cross-pollination of skills builds a more resilient and versatile team.

- Establish Shared Goals: Align the entire team around common quality metrics, such as bug escape rates or customer satisfaction scores. This ensures everyone is working toward the same definition of "done."

- Create Communities of Practice: Form groups for QA professionals across different teams to share knowledge, discuss challenges, and standardize best practices, preventing knowledge silos from forming.

Best Practices Quality Assurance Comparison

| Approach | Implementation Complexity 🔄 | Resource Requirements ⚡ | Expected Outcomes 📊 | Ideal Use Cases 💡 | Key Advantages ⭐ |
|---|---|---|---|---|---|
| Shift-Left Testing | Medium (needs cultural shift, setup time) | Medium (automation tools, training) | Early defect detection, improved quality | Projects aiming for early bug fixes and faster delivery | Reduces bug fix cost, improves collaboration |
| Risk-Based Testing | Medium-High (expertise in risk assessment) | Low-Medium (risk analysis, stakeholder input) | Focused testing on high-risk areas | Critical systems requiring prioritized testing | Optimizes resources, reduces project risk |
| Test Automation Pyramid | Medium (testing discipline required) | Medium (test tools, maintenance effort) | Faster feedback, scalable, reliable tests | Teams emphasizing automated testing efficiency | Fast test cycles, lower maintenance costs |
| Continuous Integration & Testing | High (complex CI/CD pipeline setup) | High (test infrastructure, maintenance) | Immediate feedback, higher deployment velocity | Continuous delivery environments | Rapid feedback, reduces integration problems |
| Behavior-Driven Development (BDD) | Medium-High (requires training & culture change) | Medium (collaborative process, tools) | Shared understanding, living documentation | Cross-functional teams with focus on business value | Improves communication, reduces ambiguity |
| Exploratory Testing | Low-Medium (depends on tester skills) | Low (minimal tooling) | Finds unexpected defects, quick adaptation | Testing new features, usability, or complex systems | Detects edge cases, enhances tester creativity |
| Defect Prevention & Root Cause Analysis | Medium-High (systematic analysis needed) | Medium (time investment, process change) | Lower defect density long-term, improved process | Organizations focused on maturity and quality improvement | Reduces defects, enhances learning |
| Test Data Management | Medium-High (complex data setup) | Medium-High (storage, masking tools) | Reliable, compliant test data | Projects with sensitive data or complex environments | Ensures compliance, improves test accuracy |
| Performance Testing Integration | High (specialized tools and infrastructure) | High (infrastructure and expertise) | Early detection of performance issues | Systems with strict performance requirements | Prevents production issues, data-driven planning |
| Cross-Functional Quality Teams | High (organizational and cultural change) | Medium (training, cross-functional roles) | Shared quality ownership, faster feedback | Agile/DevOps teams emphasizing collaboration | Increases quality ownership, reduces delays |

Building a Future-Proof Quality Culture

The journey toward exceptional quality assurance is not a destination; it is a continuous, evolving process. Navigating through the ten best practices for quality assurance outlined in this article, from shifting left to embracing cross-functional teams, can seem like a monumental task. However, the goal is not to implement every single strategy overnight. The real objective is to cultivate a deep-seated culture of quality that permeates every aspect of your customer support and development lifecycles.

Think of these practices not as rigid rules, but as a flexible framework for transformation. By integrating even a few of these powerful concepts, you begin to fundamentally change your team’s relationship with quality. You move from a reactive state of "firefighting" customer issues to a proactive state of anticipating needs and preventing defects before they ever reach your user base. This shift is the cornerstone of building a resilient, high-performing support operation.

Key Takeaways and Actionable Next Steps

To make this transition manageable, it's crucial to focus on incremental progress and tangible results. Here is a practical roadmap to get started:

- Start Small, Win Big: Choose one or two practices that address your most significant pain points. If your team is buried in repetitive regression tests, exploring the Test Automation Pyramid might be your best first step. If communication gaps between support and development are causing delays, implementing Behavior-Driven Development (BDD) principles can bridge that divide with a shared language.

- Measure What Matters: You cannot improve what you do not measure. Establish clear, simple metrics to track the impact of your new initiatives. This could be a reduction in bug resolution time, a decrease in ticket volume for recurring issues, or an increase in your Customer Satisfaction (CSAT) score. These data points provide the proof needed to build momentum and justify further investment in quality.

- Empower Your People: Quality is everyone's responsibility, but your team needs the right tools and training to succeed. Equip them with modern solutions that simplify complex processes. For instance, facilitating clear, unambiguous communication for defect reporting is critical. Adopting video-based tools can dramatically improve the clarity of bug reports, replacing long, confusing text descriptions with precise visual evidence.

Core Insight: True quality assurance isn't about adding another layer of testing at the end of a process. It’s about weaving quality into the fabric of how your team thinks, communicates, and operates from the start.

The Lasting Impact of a Quality-Centric Mindset

Mastering these best practices for quality assurance delivers benefits that extend far beyond a cleaner bug queue. When your organization commits to a culture of quality, you create a positive feedback loop. Developers receive clearer feedback, enabling them to build better products. The customer support team spends less time on frustrating, repetitive problems and more time delivering high-value, proactive assistance.

Ultimately, your customers feel the difference. They experience a more stable, reliable product and receive faster, more effective support when they need it. This fosters loyalty, increases customer lifetime value, and transforms your support team from a cost center into a powerful driver of business growth and brand reputation. The path forward is clear: invest in quality, empower your team, and build a customer experience that sets you apart from the competition.
